Paper: https://arxiv.org/abs/2411.16346 Abstract Notable progress has been made in generalist medical large language models across various healthcare areas. However, large-scale modeling of …

Recent Publications

A Foundation Model for Intensive Care: Unlocking Generalization across Tasks and Domains at Scale

(Best Paper Award at ML4H Findings Track and invited talk for AI in Medicine at BIFOLD Institute)

Manuel Burger, Daphné Chopard, Gregor Lichtner, Malte Londschien, Fedor Sergeev, Moritz Fuchs, Hugo Yèche, Rita Kuznetsova, Martin Faltys, Eike Gerdes, Polina Leshetkina, Micha Christ, Moritz Schanz, Nora Göbel, Peter Bühlmann, Elias Grünewald, Felix Balzer, Gunnar Rätsch


Working Paper on medRxiv

Domain generalization and adaptation in intensive care with anchor regression

Malte Londschien, Manuel Burger, Gunnar Rätsch, Peter Bühlmann


RSS Data Science and Artificial Intelligence

Data-Driven Discovery of Feature Groups in Clinical Time Series

Fedor Sergeev, Manuel Burger, Polina Leshetkina, Vincent Fortuin, Gunnar Rätsch, Rita Kuznetsova


ML4H 2025 (PMLR)

Recent Posts

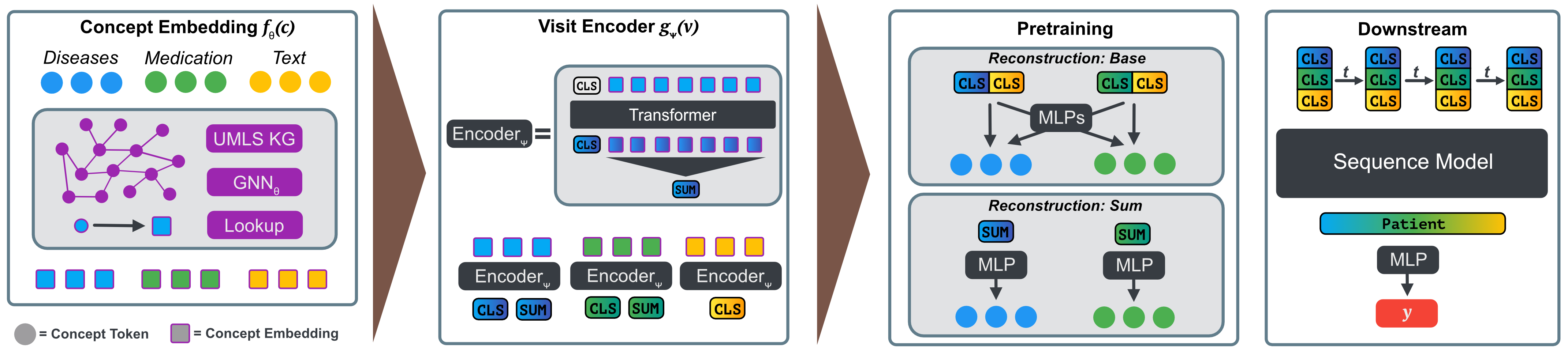

Clinicians are increasingly looking to machine learning for insight into how patients' conditions evolve. We propose a novel approach, Multi-Modal UMLS Graph Learning (MMUGL), for learning meaningful representations of medical concepts using graph neural networks over knowledge graphs based on the Unified Medical Language System (UMLS). These concept representations are aggregated to represent entire patient visits and then fed into a sequence model that makes predictions across multiple hospital visits of a patient. We improve performance by incorporating prior medical knowledge and considering multiple modalities. We compare our method with existing architectures that learn representations at different granularities on the MIMIC-III dataset and show that our approach outperforms them. The results demonstrate the significance of multi-modal medical concept representations based on prior medical knowledge.
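To make the three stages in the summary above concrete (concept representations learned over a graph, aggregation per visit, a sequence model across visits), here is a minimal pure-Python sketch. It is not the MMUGL implementation: the toy graph, the embeddings, and every function name are hypothetical, mean aggregation stands in for a trained graph neural network layer, a fixed tanh recurrence stands in for the sequence model, and the multi-modal inputs are left out entirely.

```python
import math

# Toy knowledge graph: hypothetical concept ids and undirected edges (not real UMLS data).
EDGES = [(0, 1), (1, 2), (2, 3)]
NUM_CONCEPTS = 4

# Hypothetical 2-dimensional initial concept embeddings.
INITIAL = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]


def neighbours(num_nodes, edges):
    """Build an adjacency map that includes a self-loop for every node."""
    adj = {i: {i} for i in range(num_nodes)}
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    return adj


def mean(vectors):
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def message_pass(embeddings, adj):
    """One round of mean-aggregation message passing (stand-in for a GNN layer)."""
    return [mean([embeddings[j] for j in sorted(adj[i])])
            for i in range(len(embeddings))]


def encode_visit(concept_ids, embeddings):
    """Aggregate the concept embeddings of one visit by mean pooling."""
    return mean([embeddings[c] for c in concept_ids])


def encode_patient(visits, embeddings, decay=0.5):
    """Fold visit vectors into one state with a simple recurrent update."""
    state = [0.0] * len(embeddings[0])
    for visit in visits:
        v = encode_visit(visit, embeddings)
        state = [math.tanh(decay * s + x) for s, x in zip(state, v)]
    return state


adj = neighbours(NUM_CONCEPTS, EDGES)
concept_vectors = message_pass(INITIAL, adj)
# A hypothetical patient with two visits, each a list of concept ids.
patient_state = encode_patient([[0, 1], [2, 3]], concept_vectors)
```

In a real model the final state would feed a trained prediction head; here it only illustrates how information flows from the graph to the visit level and then to the patient level.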

Recent Talks

Spotlight Talk

A Foundation Model for Intensive Care: Unlocking Generalization across Tasks and Domains at Scale

ML4H 2025 @ San Diego, USA

Dec 2025

Invited Talk

Scaling Critical Care AI: Towards Conversational Interactions with Patient Data

AI in Medicine Workshop @ Charité Berlin and BIFOLD Institute, Berlin, Germany

Nov 2025

Spotlight Talk

Towards Foundation Models for Critical Care Time Series

AIM-FM Workshop @ NeurIPS 2024, Vancouver, Canada

Dec 2024

Awards & Honors

Apple Scholar in AI/ML


@ Apple

2026 Apple Scholar in AI/ML fellowship to recognize outstanding research in AI/ML by early-career researchers.

Apr 2026

Best Paper

Best Paper Award

@ ML4H 2025

Best paper award for the findings track paper 'A Foundation Model for Intensive Care: Unlocking Generalization across Tasks and Domains at Scale'

Dec 2025

Best Paper

Best Paper Award

@ AIM-FM, NeurIPS 2024

Best paper award for the paper 'Towards Foundation Models for Critical Care Time Series'

Dec 2024